Negative AI ROI Is Usually an Operating-System Problem

When a CRO or CFO says the AI budget is not paying back, the instinct is usually to blame the tool. Sometimes that is correct. More often, the tool is exposing a control problem that already existed inside the revenue system.

According to a HubSpot benchmark, 28% of sales leaders report negative ROI from AI tools[1]. A 2025 Validity benchmark found that 45% of companies say their CRM data is not prepared for AI[2]. Put those two facts together and the result is less surprising than the marketing suggests. Many teams are buying automation on top of records they would not trust in a board forecast.

Define ROI Before You Quote the Problem

If leadership is going to discuss AI ROI seriously, the metric basis needs to be explicit. Otherwise the company is only debating anecdotes.

- Time window: monthly, quarterly, or trailing 12-month return.

- Benefit basis: rep time recovered, support volume deflected, conversion lift, forecast-labor reduction, or headcount avoided.

- Cost basis: software spend, implementation effort, training time, workflow redesign, and exception handling after launch.

- Evidence threshold: observed operating change versus modeled expectation.

Without that definition, "negative ROI" can mean anything from "the team dislikes it" to "the workflow costs more to run than it saves." Those are different problems and should not be managed as one category.
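One way to keep the four choices explicit is to record them together, so that an ROI figure cannot be quoted without its window, benefit basis, cost basis, and evidence type. The sketch below is illustrative only: the `RoiDefinition` class, its field names, and the dollar amounts are assumptions introduced here, not figures from either benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoiDefinition:
    """Hypothetical record of the four choices above; all names are illustrative."""
    window: str         # time window, e.g. "quarterly"
    benefit_basis: str  # e.g. "rep time recovered"
    benefit: float      # benefit in dollars over the window
    cost: float         # all-in cost in dollars over the window
    observed: bool      # True = observed operating change, False = modeled expectation

    def roi(self) -> float:
        """Simple ROI: (benefit - cost) / cost."""
        if self.cost <= 0:
            raise ValueError("cost must be positive")
        return (self.benefit - self.cost) / self.cost

    def summary(self) -> str:
        evidence = "observed" if self.observed else "modeled"
        return f"{self.window} ROI on {self.benefit_basis} ({evidence}): {self.roi():.0%}"


# Made-up numbers, purely for illustration.
modeled = RoiDefinition("quarterly", "rep time recovered", 60_000.0, 40_000.0, observed=False)
observed = RoiDefinition("quarterly", "rep time recovered", 30_000.0, 40_000.0, observed=True)

print(modeled.summary())   # quarterly ROI on rep time recovered (modeled): 50%
print(observed.summary())  # quarterly ROI on rep time recovered (observed): -25%
```

With the same cost basis, the modeled and observed figures can point in opposite directions, which is why the evidence threshold belongs in the definition rather than in a footnote.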

Why the Tool Fails Before the Model Does

Most negative-AI-ROI stories start earlier than prompt quality or model quality. They start when the operating inputs are weak.

- The CRM is not governable. Account ownership is unclear, stages are subjective, renewal fields are incomplete, or billing reality does not reconcile back to the pipeline.

- The workflow was never standardized. Teams try to automate activities that still vary by rep, manager, or segment, which means the tool inherits inconsistency rather than reducing it.

- No one owns the exceptions. False positives, bad triggers, and poor summaries show up immediately after launch. If there is no cadence to review them, trust collapses fast.

- The buying case was headcount fantasy. Leadership expected the tool to replace process discipline rather than compound it.

That is why a tool can demo well, get deployed quickly, and still fail economically. The company did not buy software into a controlled operating environment. It bought software into ambiguity. The governance prerequisites described in the AI sales automation readiness checklist exist precisely to prevent this sequence.

Where the Benchmark Actually Points

The 28% negative-ROI benchmark should not be read as "AI does not work." It should be read as a warning that deployment quality is uneven. The Validity benchmark points in the same direction: nearly half of companies say their CRM data is not prepared for AI[2]. When that is the baseline, the median implementation problem is not model access. It is revenue-system readiness.

That also explains why the strongest published automation evidence tends to show up in support and tightly scoped workflows before it shows up in complex early-stage sales motions. Structured work with explicit rules is easier to automate than a CRM full of subjective stage changes and stale opportunity data.

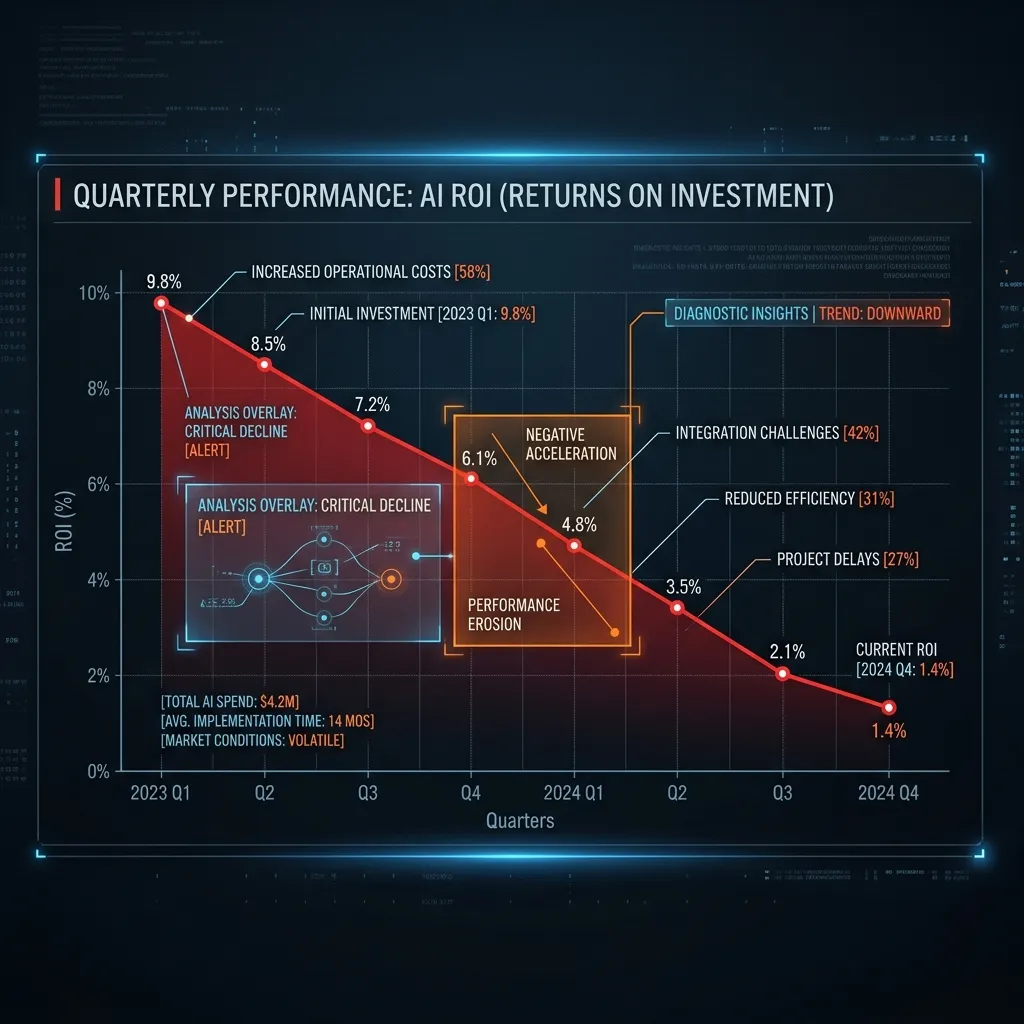

An Illustrative ROI Failure Example

Illustrative example: a 10-rep team buys an AI workflow expecting time savings to offset the spend. Three months later, the team is still correcting summaries, ignoring routed tasks, and manually fixing fields the workflow updates incorrectly. The software cost is visible immediately. The labor saved is theoretical because the operating process never stabilized enough to trust the output.

In that scenario, the problem is not that automation has no value. The problem is that the company tried to monetize time savings before it had governed the conditions required to produce them.
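The arithmetic behind that scenario can be sketched in a few lines. Only the 10-rep team size and the three-month horizon come from the example. The hourly cost, software spend, and hours figures are hypothetical values chosen to show the mechanism, and `net_value` is an illustrative helper, not a standard formula.

```python
REPS = 10    # team size from the example above
WEEKS = 13   # roughly three months

# Everything below is a hypothetical assumption, not data from the article.
HOURLY_COST = 60.0         # assumed loaded cost of one rep hour, in dollars
SOFTWARE_COST = 15_000.0   # assumed software spend for the period, in dollars
MODELED_HOURS_SAVED = 3.0  # hours per rep per week assumed in the buying case
CORRECTION_HOURS = 2.5     # hours per rep per week spent fixing summaries and fields


def net_value(hours_saved: float, hours_correcting: float) -> float:
    """Dollar value of net hours recovered across the team, minus software spend."""
    net_hours = (hours_saved - hours_correcting) * REPS * WEEKS
    return net_hours * HOURLY_COST - SOFTWARE_COST


buying_case = net_value(MODELED_HOURS_SAVED, 0.0)               # no correction work assumed
after_launch = net_value(MODELED_HOURS_SAVED, CORRECTION_HOURS)  # correction work included

print(buying_case)   # 8400.0
print(after_launch)  # -11100.0
```

Under these assumed numbers the tool still saves time on paper, but the correction labor consumes most of it, so the full software cost lands against a fraction of the expected benefit.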

The Better Diagnostic Question

Instead of asking "which AI tool should we buy?", leadership should ask four narrower questions first:

- Can we trust the active revenue records? The CRM, billing view, and renewal view should not contradict each other materially.

- Are stage exits based on observable evidence? Automation on top of subjective pipeline movement simply scales weak judgment.

- Do we have one owner for workflow exceptions? Someone must review bad outputs, bad triggers, and process drift after launch.

- Have we defined how ROI will be measured? The board should not hear "AI is working" without a stated time window and benefit basis.

If the answer to those questions is mostly no, the immediate need is not more AI experimentation. It is controls work.
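The four questions can be treated as a simple gate ahead of any purchase decision. The sketch below is one possible encoding, not a prescribed method: the `ReadinessCheck` fields, the recommendation wording, and the rule that "mostly no" means more no answers than yes answers are all assumptions made for illustration.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ReadinessCheck:
    """Hypothetical yes/no answers to the four questions above."""
    records_trusted: bool             # CRM, billing, and renewal views agree
    stage_exits_evidence_based: bool  # stage changes rest on observable evidence
    exception_owner_named: bool       # one owner reviews bad outputs and triggers
    roi_measurement_defined: bool     # time window and benefit basis are stated


def gaps(check: ReadinessCheck) -> list[str]:
    """Names of the questions answered 'no'."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


def recommend(check: ReadinessCheck) -> str:
    """Assumed rule: more 'no' than 'yes' answers means controls work comes first."""
    missing = gaps(check)
    answered_yes = len(fields(check)) - len(missing)
    if len(missing) > answered_yes:
        return "controls work first"
    if missing:
        return "close remaining gaps before piloting"
    return "ready for a scoped pilot"


# Illustrative team: only the exception owner is in place.
team = ReadinessCheck(
    records_trusted=False,
    stage_exits_evidence_based=False,
    exception_owner_named=True,
    roi_measurement_defined=False,
)
print(gaps(team))
print(recommend(team))  # controls work first
```

Returning the named gaps alongside the recommendation keeps the conversation on specific controls rather than on a general readiness score.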

What the Correct Sequence Looks Like

For MxM Revenue Engineering, the sequence is strict for a reason. The Forecast Integrity Scorecard diagnoses whether the revenue system is stable enough to support automation. Controls Install turns the key rules into enforceable operating behavior. Governance keeps those rules alive long enough for the business to trust the outputs. Only then does AI Revenue Engineering make economic sense as a second-layer offer.

That order is less exciting than a tool-first launch story, but it is much closer to how durable ROI is actually created. Clean records, explicit review cadence, reconciled revenue logic, and one definition of the number make automation more useful. Without those, the business usually ends up paying for an AI layer and a manual correction layer at the same time.

The Board-Level Interpretation

A board should not hear "we are investing in AI" as a strategy line by itself. It should hear whether the company has created the control environment required for that investment to produce a measurable operating return. If the answer is no, negative ROI is not a surprise. It is the expected result of buying automation ahead of governance. The same disciplines that drive forecast accuracy (stage hygiene, version control, and reconciliation logic) are the exact controls that make AI outputs trustworthy.

The useful takeaway is not to become anti-AI. It is to stop treating deployment discipline as optional. The tool fails before the model does because the business asks it to operate inside a revenue system that still cannot explain its own numbers.