AI Sales Automation Is a Sequence Problem

Most discussions about AI sales automation start with the tool. That is backwards. The real question is whether the operating data is trustworthy enough for automation to act on. If stages are loose, account records are incomplete, and billing or renewal reality is disconnected from the CRM, the company will still get AI outputs. It just will not get outputs it can trust.

That is why the issue is not whether automation is useful. It is whether the business is ready to automate on top of the records it already has. At Series A-B, that distinction matters because most of the value case depends on workflow quality rather than model novelty.

Why the Economics Still Matter

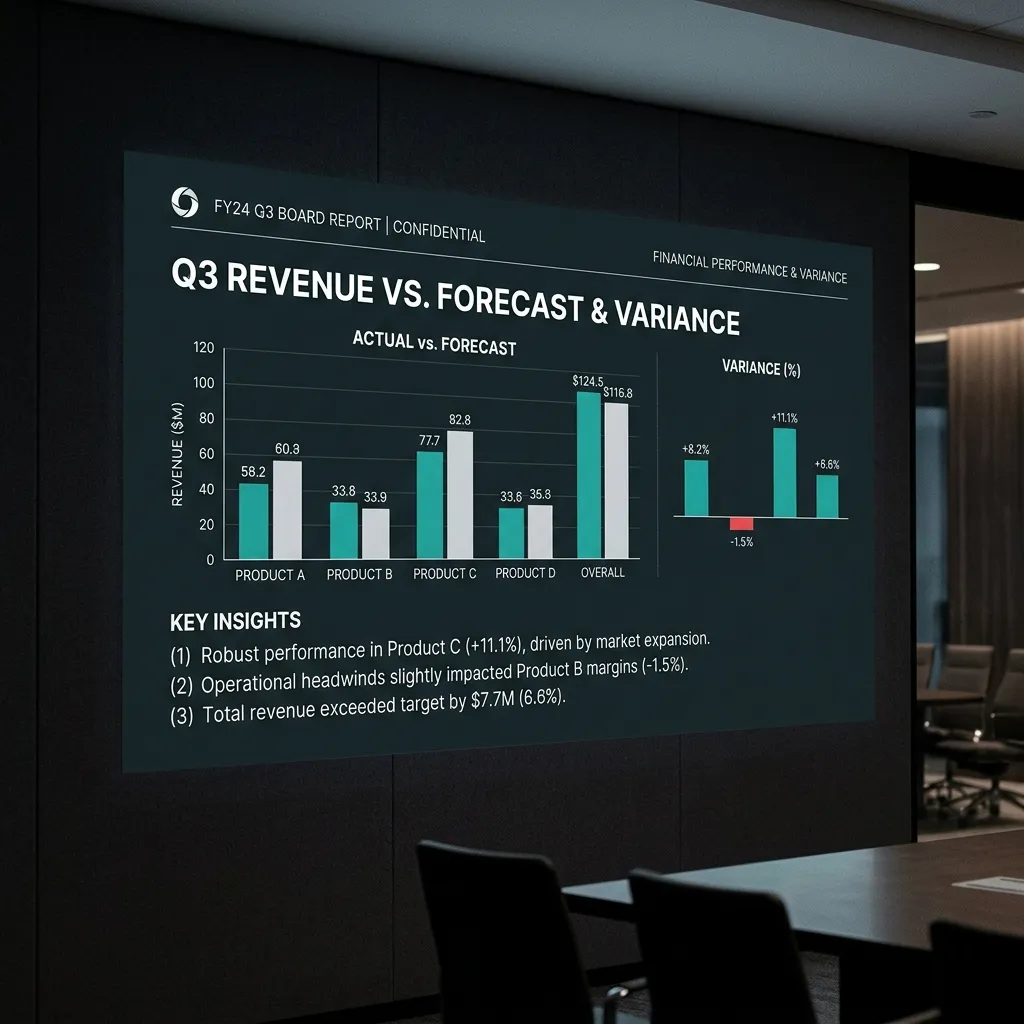

Research benchmark: sales teams continue to spend a large share of their time on admin, data entry, internal coordination, and preparation rather than active selling[1]. That makes automation economically attractive even before it makes forecasting or governance sense. If the team can reclaim meaningful rep time from repetitive work, selling capacity improves without a proportional increase in headcount.

Illustrative example: on a 10-rep team, even modest time recovery can create the equivalent of added selling capacity without hiring another fully ramped rep. The exact ROI will vary by process quality, compensation structure, and how much of the workflow is genuinely automatable, but the pressure to automate is rational.
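The arithmetic behind that illustration can be sketched in a few lines. Every input below is a hypothetical placeholder (a 40-hour week, 30% of time on admin, 25% of that admin time recovered), not a figure from the cited research, and the function name is ours. Swap in the team's own numbers before drawing conclusions.

```python
def recovered_rep_equivalents(
    reps: int,
    hours_per_week: float,
    admin_share: float,
    recovery_rate: float,
) -> float:
    """Convert recovered admin hours into full-time rep equivalents.

    admin_share: fraction of each rep's week spent on non-selling work.
    recovery_rate: fraction of that admin time automation gives back.
    """
    admin_hours = reps * hours_per_week * admin_share
    recovered_hours = admin_hours * recovery_rate
    return recovered_hours / hours_per_week


# Hypothetical inputs, chosen only to illustrate the calculation.
fte = recovered_rep_equivalents(
    reps=10, hours_per_week=40.0, admin_share=0.30, recovery_rate=0.25
)
print(f"{fte:.2f} rep-equivalents of weekly capacity recovered")
```

Under these assumed inputs the team recovers 30 hours a week, or three quarters of one rep's week. The result scales linearly with each input, so it is only as reliable as the admin-share and recovery-rate estimates fed into it.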

Where the Evidence Is Strongest

The strongest published AI automation evidence today tends to sit in support and other structured workflows. Those environments have clearer inputs, narrower failure modes, and more repetitive transactions than a complex B2B sales motion. That is why support automation benchmarks often look stronger than AI SDR or deal-inspection case studies.

Research benchmark: support organizations with clean documentation and well-structured account context can automate a meaningful share of tier-one volume[2]. The exact range depends on documentation quality, process design, and how narrowly the workflow is scoped. The lesson for sales teams is not that support and sales are the same. It is that AI works best where the operating inputs are structured and the failure modes are explicit.

Why AI Sales Agents Underperform Early

At Series A-B, the blockers are usually operational, not philosophical.

- CRM readiness is weak. AI outreach, scoring, routing, and summarization all depend on account, contact, stage, and timing fields being current enough to support action.

- Stage movement is not governed. If the CRM reflects rep optimism more than observable evidence, automation simply accelerates weak judgment. Stage-exit controls must come before any AI layer that depends on stage data.

- Tool economics are front-loaded. The subscription starts immediately while the governance capability required to make the tool useful is still missing.

- Compliance and trust still apply. Automated actions touching prospect or customer records inherit the same privacy and internal-control requirements as any other business system.

None of that means AI sales automation is a bad idea. It means the business should stop treating the tool purchase as the first implementation milestone.
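A stage-exit control of the kind described in the list above can be sketched as a simple evidence gate. This is a minimal illustration, not a prescribed implementation: the stage names, the evidence labels, and the `Opportunity` structure are all hypothetical, and real exit criteria are specific to each company's sales process.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical evidence required before a deal may leave each stage.
REQUIRED_EVIDENCE: dict[str, set[str]] = {
    "discovery": {"pain_documented", "decision_maker_identified"},
    "proposal": {"pricing_sent", "mutual_close_plan"},
}


@dataclass
class Opportunity:
    name: str
    stage: str
    evidence: set[str] = field(default_factory=set)


def missing_exit_evidence(opp: Opportunity) -> set[str]:
    """Return the evidence items still needed to exit the current stage."""
    required = REQUIRED_EVIDENCE.get(opp.stage, set())
    return required - opp.evidence


def can_exit_stage(opp: Opportunity) -> bool:
    """A deal may advance only when no required evidence is missing."""
    return not missing_exit_evidence(opp)


opp = Opportunity("Acme renewal", "proposal", {"pricing_sent"})
print(can_exit_stage(opp), missing_exit_evidence(opp))
```

The point of the gate is that stage movement depends on recorded evidence rather than rep optimism, so any automation reading the stage field inherits a checked value.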

The Readiness Standard Before Deployment

Before layering AI onto the GTM system, leadership should be able to answer a short readiness checklist.

- Can the company trust the active pipeline? Close dates, stages, owners, and amounts should hold up under weekly review.

- Are stage exits tied to observable evidence? Automation should not depend on subjective pipeline movement.

- Can Finance and GTM reconcile the number? If billing and renewal reality routinely diverge from CRM expectations, the AI layer will inherit the same distortion. A CRM-to-cash reconciliation review answers this question before the AI subscription starts.

- Is there a review cadence for exceptions? Someone needs to own bad records, bad triggers, and false positives after launch.

If the answer to those questions is mostly no, the company is not blocked from AI forever. The answers simply set the order of operations: fix the controls first, then automate.
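The reconciliation question in the checklist above is the easiest to make concrete. The sketch below assumes two hypothetical inputs, expected amounts per account from the CRM and billed amounts per account from Finance, and flags any account where the two diverge by more than a tolerance. The 5% tolerance and the account names are illustrative assumptions only.

```python
from __future__ import annotations


def reconcile(
    crm_amounts: dict[str, float],
    billed_amounts: dict[str, float],
    tolerance: float = 0.05,
) -> list[str]:
    """Return accounts whose CRM and billed amounts diverge beyond tolerance.

    Accounts present in only one system are treated as 0.0 in the other,
    so they are always flagged when the known amount is non-zero.
    """
    flagged = []
    for account in sorted(set(crm_amounts) | set(billed_amounts)):
        crm = crm_amounts.get(account, 0.0)
        billed = billed_amounts.get(account, 0.0)
        baseline = max(abs(crm), abs(billed))
        if baseline == 0:
            continue  # nothing recorded in either system
        if abs(crm - billed) / baseline > tolerance:
            flagged.append(account)
    return flagged


# Hypothetical data for illustration.
crm = {"acme": 12000.0, "globex": 30000.0, "initech": 8000.0}
billed = {"acme": 12000.0, "globex": 24000.0, "umbrella": 5000.0}
exceptions = reconcile(crm, billed)
print(exceptions)
```

With this sample data, the flagged list contains the account with a mismatched amount and the two accounts that appear in only one system. Those flagged accounts are what the exception review cadence would own after launch.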

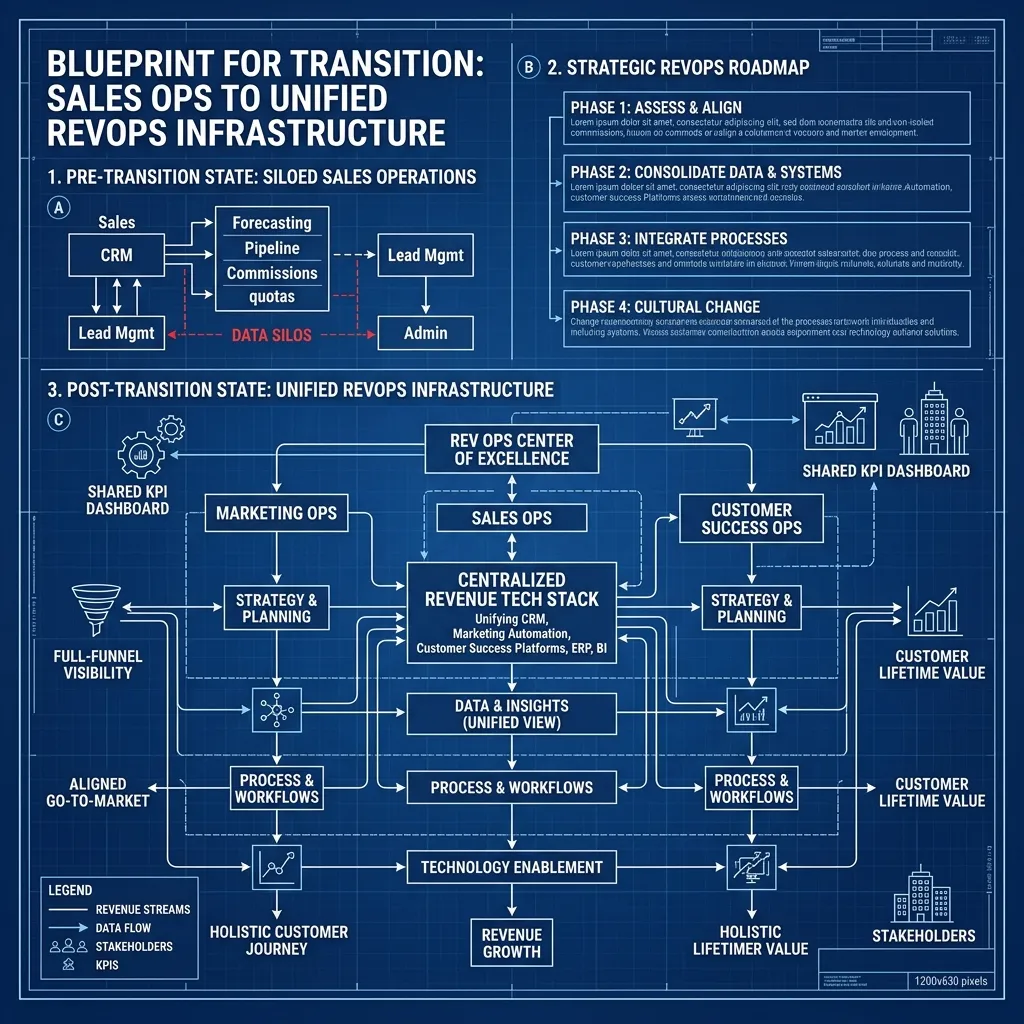

What Comes First in the MxM Sequence

This is where MxM Revenue Engineering is intentionally strict. The Scorecard identifies whether the foundation is stable enough to support automation. Controls Install enforces the stage discipline, hygiene checks, reconciliation logic, and review rhythm that make the CRM governable. Governance keeps those controls alive once the initial cleanup is finished.

Only then does AI Revenue Engineering become a serious second-layer offer. That is why the public ladder is Scorecard, then Controls Install, then Governance, then AI. The automation is not the foundation. It is the multiplier applied after the foundation is trustworthy.

What Good Looks Like After the Foundation Exists

Once the data is governed, the same tools start producing more useful outcomes. Lead routing follows real account context. Rep summaries update fields the team actually trusts. Support deflection improves because documentation is maintained. Forecast-adjacent signals become more reliable because the operating system is no longer arguing with itself.

Research benchmark: the companies reporting the best AI automation outcomes are usually the same companies with stronger documentation, tighter governance, and more disciplined operating cadences. The lesson is not "buy better AI." It is "make the underlying system reliable enough that automation has something coherent to work with."

The Decision Question

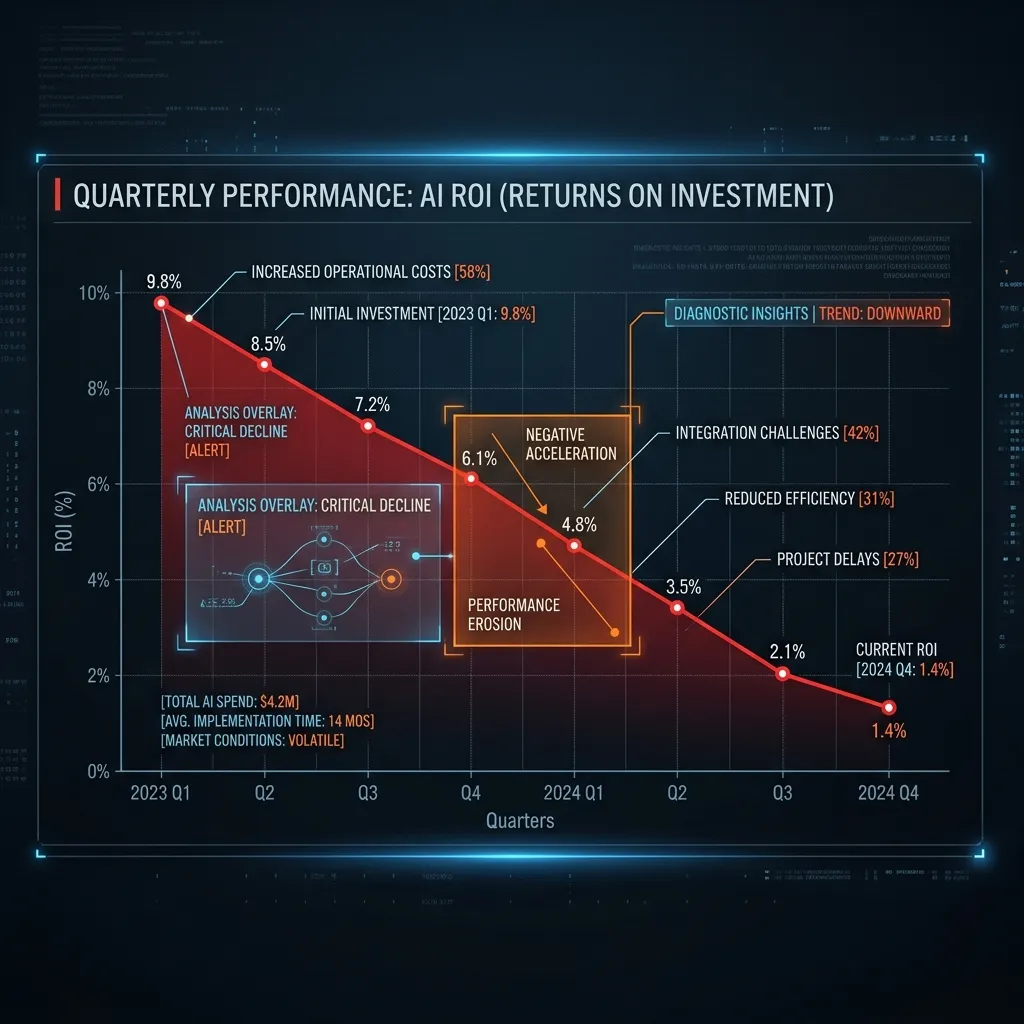

The decision is not whether AI belongs in the GTM stack. It does. The decision is whether leadership wants to buy the tool first and discover the control problem later, or diagnose the control problem first and give the tool a realistic chance to work. Buying before governing is a core reason negative ROI on AI tools is as common as it is.

For most $5M-$20M ARR SaaS teams, that is the difference between a useful automation layer and another subscription the board will eventually ask you to justify.