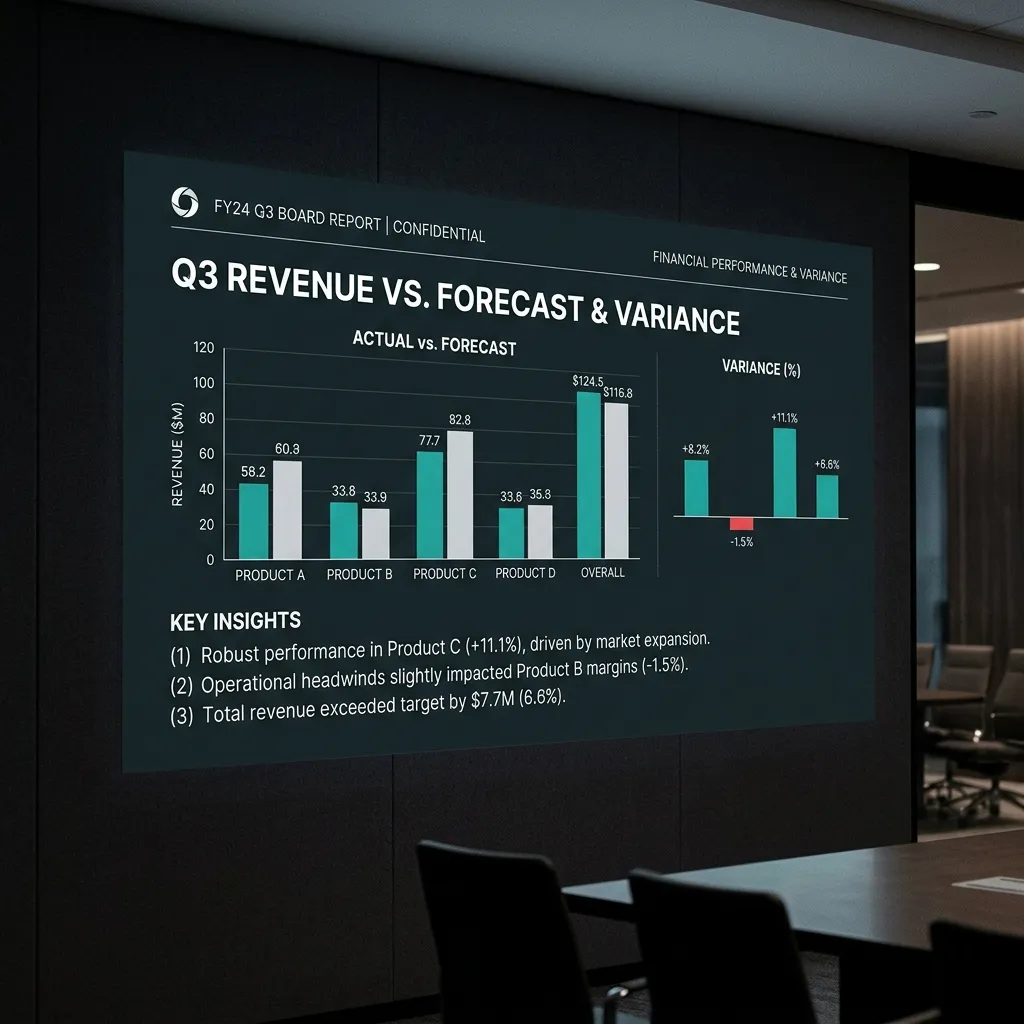

Most Forecasts Understate Renewal Risk Until It Is Too Late

Forecast reviews usually focus on new bookings, pipeline coverage, and close-date movement. Renewal risk gets pushed into a separate customer success conversation, often with its own spreadsheet, health score, and language. That separation is one of the reasons board forecasts break. If a meaningful share of next quarter's number depends on renewals, churn, contraction, or delayed expansion, retention is not a post-sale issue. It is part of the forecast.

The pattern is common in $5M-$50M ARR SaaS companies. Open pipeline is reviewed weekly. At-risk renewals are reviewed later, with less rigor, and often through subjective account notes rather than the same operating discipline applied to new business. By the time Finance sees the gap, the problem is no longer "customer success risk." It is a forecast miss, and the credits and write-offs that follow are often the largest single source of revenue leakage in the quarter.

Define the Metrics Before You Report Them

If you are going to use retention metrics in a board deck, define them first. The editorial and metrics research behind MxM Revenue Engineering's content standard is explicit on this point: NRR and GRR are only useful when the cohort, period, and revenue basis are clear.

- GRR (Gross Revenue Retention) shows how much recurring revenue from an existing cohort remains after churn and contraction, before any expansion is counted.

- NRR (Net Revenue Retention) adds expansion and subtracts contraction and churn for that same cohort.

- Minimum definition standard: state whether you are using ARR or MRR, whether the period is monthly, quarterly, or annual, and whether reactivations, price uplifts, and non-recurring revenue are included.

Without that definition standard, retention reporting looks precise but is not comparable from one report to the next. A leadership team can say "NRR is healthy" and still be hiding contraction, delayed renewals, or one-time pricing changes inside the number.
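A small worked example makes the gap between the two metrics concrete. The sketch below assumes an annual ARR basis and a single fixed cohort, with reactivations, price uplifts, and non-recurring revenue excluded. The class name, field names, and dollar figures are illustrative and not taken from any particular system.

```python
from dataclasses import dataclass


@dataclass
class CohortMovement:
    """ARR movements for one fixed cohort over one period (hypothetical fields)."""
    starting_arr: float     # recurring revenue from the cohort at period start
    churned_arr: float      # ARR lost to full cancellations
    contraction_arr: float  # ARR lost to downsells within retained accounts
    expansion_arr: float    # ARR gained from the same cohort


def grr(m: CohortMovement) -> float:
    """Gross revenue retention: what remained before expansion is counted."""
    return (m.starting_arr - m.churned_arr - m.contraction_arr) / m.starting_arr


def nrr(m: CohortMovement) -> float:
    """Net revenue retention: the GRR basis plus expansion from the same cohort."""
    retained = m.starting_arr - m.churned_arr - m.contraction_arr
    return (retained + m.expansion_arr) / m.starting_arr


# Made-up figures for illustration only.
cohort = CohortMovement(
    starting_arr=1_000_000,
    churned_arr=60_000,
    contraction_arr=40_000,
    expansion_arr=120_000,
)
print(f"GRR {grr(cohort):.1%}, NRR {nrr(cohort):.1%}")
```

With these figures NRR lands above 100% while GRR shows that a tenth of the starting base was lost, which is the kind of detail a single "healthy" NRR number can hide.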

Where Renewal Forecasts Usually Break

The failure mode is rarely that a team has no account data. The failure mode is that the data being used to call risk is not the data that actually predicts the commercial outcome. In practice, three gaps show up repeatedly.

- Health scores are subjective. A manually maintained red-yellow-green field in the CRM is useful for account triage, but weak for financial forecasting if it is not tied to observable signals.

- Renewal mechanics are incomplete. Renewal date, notice period, pricing change, open ticket burden, unresolved implementation issues, and usage decline often live in different systems or are not captured at all.

- Expansion and contraction are blended too late. Teams report one renewal number without clearly separating likely renewals, likely downsells, and upside from expansion.

That is why many renewal forecasts feel stable until the last 30-45 days. The issue is not that churn came from nowhere. The issue is that the company did not have a shared operating view of risk early enough to model it.

What to Track 90 Days Before Renewal

A better approach is a renewal risk ledger. This is not a complicated new platform. It is a shared review object that gives every meaningful renewal a status, an owner, a renewal date, and a concrete risk basis. The point is not to predict the future perfectly. The point is to stop letting "likely renewal" mean whatever the room wants it to mean.

- Commercial facts: contract end date, notice date, current ARR, renewal amount, known expansion or contraction scenario.

- Observable account signals: product usage trend, support ticket pattern, executive sponsor activity, open escalations, implementation debt.

- Operating assessment: risk status, confidence level, mitigation owner, and next review date.
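The three groups of fields above could be captured in a structure as simple as the one sketched here. Every name, status value, and account in it is hypothetical, and the 90-day window and the "at-risk entries must state a basis" check are assumptions chosen to mirror the text, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class RenewalLedgerEntry:
    # Commercial facts
    account: str
    contract_end: date
    notice_date: date
    current_arr: float
    renewal_amount: float   # expected value under the known scenario
    # Observable account signals (hypothetical encoding)
    usage_trend: str        # e.g. "stable" or "declining"
    open_escalations: int
    # Operating assessment
    risk_status: str        # e.g. "on_track" or "at_risk"
    risk_basis: str         # the concrete reason behind the status
    confidence: str
    mitigation_owner: str
    next_review: date


def due_for_review(ledger, today, window_days=90):
    """Entries whose contract ends within the window, soonest first."""
    cutoff = today + timedelta(days=window_days)
    due = [e for e in ledger if today <= e.contract_end <= cutoff]
    return sorted(due, key=lambda e: e.contract_end)


def missing_risk_basis(ledger):
    """Entries marked as anything other than on-track with no stated basis."""
    return [e for e in ledger
            if e.risk_status != "on_track" and not e.risk_basis.strip()]


# Made-up accounts for illustration only.
ledger = [
    RenewalLedgerEntry("Acme", date(2025, 3, 31), date(2025, 1, 30),
                       120_000, 120_000, "stable", 0,
                       "on_track", "", "high", "cs_lead_a", date(2025, 2, 1)),
    RenewalLedgerEntry("Globex", date(2025, 2, 15), date(2025, 1, 16),
                       80_000, 60_000, "declining", 2,
                       "at_risk", "usage down, two open escalations",
                       "medium", "cs_lead_b", date(2025, 1, 20)),
    RenewalLedgerEntry("Initech", date(2025, 9, 30), date(2025, 8, 1),
                       60_000, 60_000, "stable", 1,
                       "at_risk", "", "low", "unassigned", date(2025, 6, 1)),
]

today = date(2025, 1, 10)
print([e.account for e in due_for_review(ledger, today)])
print([e.account for e in missing_risk_basis(ledger)])
```

The second check is the useful one in a review meeting: an account flagged at risk with an empty basis is a subjective call, not a forecast input.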

Once that ledger exists, Finance, CS, and GTM leaders can review the same book of business with the same language. That is the difference between a retention narrative and a retention forecast.

How This Connects to Forecast Integrity

Retention discipline belongs in the same control environment as pipeline discipline. The operating question is the same in both cases: what evidence exists for the number currently in the forecast? For new business, that means qualification, stage-exit criteria, and close-date hygiene. For renewals, it means cohort definitions, commercial dates, and risk signals that are visible before the account slips. The forecast accuracy problems that arise from weak pipeline hygiene arise just as readily when renewal visibility is incomplete.

This is also where MxM Revenue Engineering's offer sequence matters. A Forecast Integrity Scorecard should not only inspect pipeline mechanics. It should also show whether the company is understating renewal risk, overcounting expansion, or mixing incompatible definitions of NRR and churn. The Controls Install then turns that diagnosis into a repeatable review cadence. Governance keeps the definitions and reporting clean quarter after quarter.

The Board-Level Standard

A board-defensible forecast does not treat renewals as "expected unless flagged." It shows the retained base, the at-risk base, the likely contraction, and the expansion assumption explicitly. It also tells the board how the company is defining NRR and what has changed in that definition since the last review, if anything.
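As a rough illustration, the four lines of that board view can be derived from a simple list of renewals. The sketch below is one possible way to do the split, not a standard method. It assumes at-risk accounts are held out at their full current ARR rather than probability-weighted, and the row layout, status labels, and figures are all made up.

```python
# Hypothetical rows: (account, status, current_arr, expected_renewal_arr)
renewals = [
    ("Acme", "on_track", 120_000, 120_000),
    ("Globex", "at_risk", 80_000, 80_000),
    ("Initech", "contraction", 60_000, 45_000),
    ("Umbrella", "on_track", 100_000, 115_000),
]


def board_view(rows):
    """Split the renewing base into the four lines shown to the board."""
    view = {
        "retained_base": 0,
        "at_risk_base": 0,
        "likely_contraction": 0,
        "expansion_assumption": 0,
    }
    for _account, status, current, expected in rows:
        if status == "at_risk":
            # Assumption: at-risk ARR is held out in full, not weighted.
            view["at_risk_base"] += current
            continue
        view["retained_base"] += min(current, expected)
        view["likely_contraction"] += max(current - expected, 0)
        view["expansion_assumption"] += max(expected - current, 0)
    return view


summary = board_view(renewals)
for line, amount in summary.items():
    print(f"{line:>22}: {amount:>9,}")
```

Retained base, at-risk base, and likely contraction add back up to the current ARR up for renewal, so the expansion assumption sits visibly on top of the base instead of being netted against losses.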

That standard is harder than maintaining a green health field in the CRM. It is also materially more useful. When retention is reviewed with the same discipline as new business, forecast surprises tend to shrink, cross-functional debates get shorter, and the board conversation moves from "why did this appear so late?" to "what are we doing about the accounts we already know are at risk?"